\n About \n \n \n Contact \n \n \n Sponsor \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n \n Sponsored by:Īnd there's also some text from the footer: Home \n \n \n Workshops \n \n \n Speaking \n \n \n Media \n \n If you look at output now, you'll see that we have some things we don't want. # there may be more elements you don't want, such as "style", etc.įinally, here's the full Python script to get text from a webpage: Now that we can see our valuable elements, we can build our output: There are a few items in here that we likely do not want:įor the others, you should check to see which you want. Look at the output of the following statement:

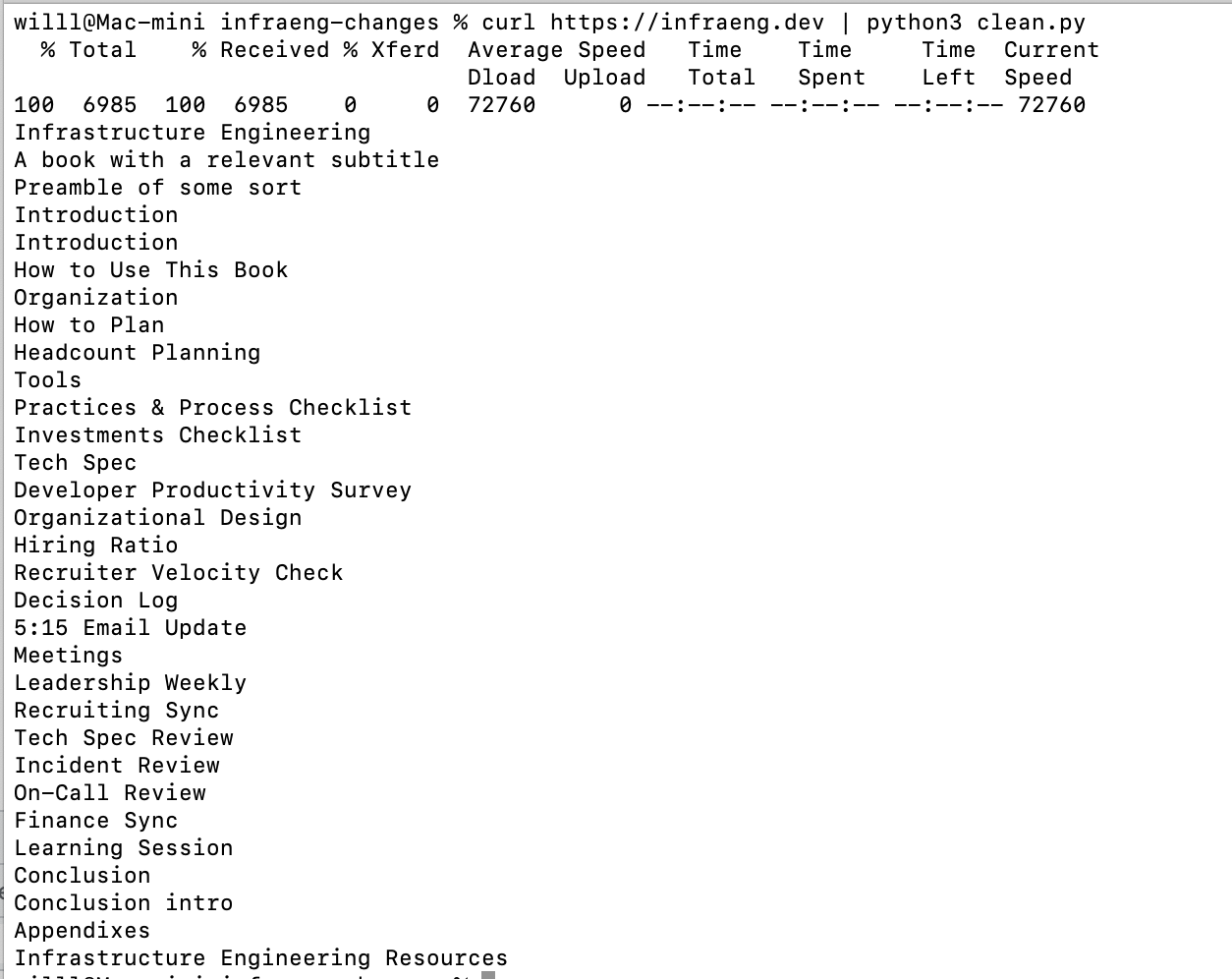

However, this is going to give us some information we don't want. Soup = BeautifulSoup(html_page, 'html.parser')īeautifulSoup provides a simple way to find text content (i.e. We'll use Beautiful Soup to parse the HTML as follows: How can we extract the information we want? Creating the "beautiful soup" but there will be a lot of clutter in there. I'll use Troy Hunt's recent blog post about the "Collection #1" Data Breach. If you're working in Python, we can accomplish this using BeautifulSoup. _extract_blocks() function needs to be defined before to_plaintext(), as it is called from there.If you're going to spend time crawling the web, one task you might encounter is stripping out visible text content from HTML.If the tag name matches one of our block elements, we will add it to the list.Inside the function, we recursively travel the element tree to find our block elements inside other elements (that are inside other elements and so on).The last thing is to define _extract_blocks() function that will take a root element and return all block elements that we are interested in: def _extract_blocks (parent_tag ) - > list : As _extract_blocks() will return a list of our block elements, we will take the text with get_text() function, strip them of left and right white space and concatenate together, separating them with a single new line.We called a helper function _extract_blocks(), passing it a root HTML element to work with – the HTML body.When initializing BeautifulSoup, we can choose which HTML parser will be used to parse the string, so we chose our installed lxml package.Soup = BeautifulSoup (html_text, features = "lxml" )Įxtracted_blocks = _extract_blocks (soup.

Our main function to_plaintext(html_text: str) -> str will take a string with the HTML source and return a concatenated string of all texts from our selected blocks: def to_plaintext (html_text : str ) - > str :

I have picked p for paragraphs, h1-h5 for headings and blockquote for quotes as an example: from bs4 import BeautifulSoupīlocks = Now we will import Beautiful Soup’s classes for working with HTML: BeautifulSoup for parsing the source and Tag which we are going to use for checking whether a particular element in the parsed BeautifulSoup tree represents an HTML tag.īesides the necessary imports, we will also define a list of block elements that we want to extract the text from. So to start off, let’s install beautifulsoup4 package and lxml parser (this is a fast parser that can be used together with BS): # install using pip We will do it with Python and Beautiful Soup 4, a Python library for scraping information from web pages. In this article I will demonstrate a simple way to grab all text content from the HTML source so that we end up with a concatenated string of all texts on the page. There are many different ways to extract plain text from HTML and some are better than others depending on what we want to extract and if we know where to find it. Articles About me How to extract plain text from an HTML page in Python
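The text-node filtering approach described above (parse with `html.parser`, find every text node, then drop the ones whose parent element is unwanted) could be sketched roughly as follows. This is a minimal sketch, not the original script: the names `BLACKLIST`, `parent_names` and `visible_text` are hypothetical, the blacklist contents are an assumption apart from "style", and `find_all(string=True)` is used as one way to collect text nodes.

```python
from bs4 import BeautifulSoup

# Assumed set of parent element names whose text is not visible content.
# Only "style" is named in the text above; the rest are illustrative guesses.
BLACKLIST = {"[document]", "html", "head", "meta", "script", "style", "noscript"}


def parent_names(html_page: str) -> set:
    """Return the names of all elements that directly contain a text node."""
    soup = BeautifulSoup(html_page, "html.parser")
    return {node.parent.name for node in soup.find_all(string=True)}


def visible_text(html_page: str) -> str:
    """Join the text nodes whose direct parent is not blacklisted."""
    soup = BeautifulSoup(html_page, "html.parser")
    kept = []
    for node in soup.find_all(string=True):
        if node.parent.name in BLACKLIST:
            continue  # e.g. JavaScript or CSS source
        stripped = node.strip()
        if stripped:  # skip whitespace-only nodes
            kept.append(stripped)
    return " ".join(kept)
```

Calling `parent_names()` first is a quick way to decide what belongs in the blacklist for a given page. Because the filter only looks at the direct parent, text inside links within a page header or footer has parent `a` and is kept, which is one plausible reason menu and footer text can still show up in the output.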

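The block-based approach described above, with `to_plaintext()` calling a recursive `_extract_blocks()` helper, might look something like this. It is a sketch under stated assumptions rather than the article's exact code: the list is spelled `BLOCKS` here, the built-in `html.parser` is swapped in for lxml so that nothing beyond beautifulsoup4 is required (pass `features="lxml"` to match the text), and the helper does not descend into an element once it has matched.

```python
from bs4 import BeautifulSoup, Tag

# Block elements to extract text from: paragraphs, h1-h5 headings, quotes.
BLOCKS = ["p", "h1", "h2", "h3", "h4", "h5", "blockquote"]


def _extract_blocks(parent_tag) -> list:
    """Recursively collect descendant tags whose name is in BLOCKS."""
    extracted = []
    for child in parent_tag.children:
        if not isinstance(child, Tag):
            continue  # bare strings and comments are not tags
        if child.name in BLOCKS:
            # Assumption: a matched block is taken whole, without
            # searching inside it, so nested text is not duplicated.
            extracted.append(child)
        else:
            extracted.extend(_extract_blocks(child))
    return extracted


def to_plaintext(html_text: str) -> str:
    """Return the text of all selected blocks, one block per line."""
    # Assumption: html.parser instead of lxml, to avoid an extra install.
    soup = BeautifulSoup(html_text, features="html.parser")
    # Fall back to the whole tree when the input has no <body>.
    root = soup.body if soup.body is not None else soup
    blocks = _extract_blocks(root)
    return "\n".join(block.get_text().strip() for block in blocks)
```

For example, `to_plaintext("<body><div><h1> Title </h1><p>Text</p></div></body>")` yields the heading and the paragraph on separate lines, while text in elements outside `BLOCKS` (scripts, bare divs, navigation links) is left out.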